NNEDV Resource Highlight: Staying Safe on Facebook

On April 5, 2017, Facebook announced that it would use photo-matching software to help stop the spread of “revenge porn,” a pervasive crime that occurs across social media channels and the internet (we prefer the more accurate term “non-consensual sharing of intimate images”). We are proud to have advised Facebook on the development and rollout of these new tools to stop the non-consensual sharing of intimate images on Facebook platforms. Read more about these new tools here.

In May 2017, Facebook added another layer of defense against improper content: 3,000 new employees were hired to review content such as hate speech, child exploitation, and “revenge porn.” With nearly 2 billion users, Facebook faces an enormous task in reviewing and removing inappropriate content. The company has expressed hope that the additional employees will help it remove content that violates Facebook policies more quickly. Read more about the new employees here. More information about how to report inappropriate content can be found here.

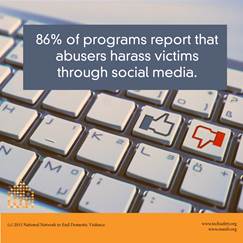

Since 2009, Safety Net has worked with Facebook to improve its safety considerations and increase survivors’ ability to use the platform safely. We believe that survivors have the right to remain connected to their friends and loved ones, and that everyone deserves to be safe at home, at work, on the street, and online.

Learn more about staying safe on Facebook:

- Safety & Privacy on Facebook: A Guide for Survivors of Abuse is available in English, Spanish (Latin America), French, Arabic, and more.
- Our quick Guide to Staying Safe on Facebook is currently available in English.
- Review additional tools and resources for survivors in our toolkit, Technology Safety & Privacy: A Toolkit for Survivors.

If you have additional questions about helping survivors stay safe on social media, or any other technology safety questions, please reach out to our Safety Net team at safetynet@nnedv.org.